Snellius: the National Supercomputer

Advantages

Superfast

Large collection of tools and libraries

Data storage

Do you have a question about the National Supercomputer? Get in touch.

Simulation and modelling

Do you work with large and complex models that require a lot of computing power? The National Supercomputer provides it with a large number of very fast processors. The system is ideally suited for large-scale experiments, such as simulations and modelling. These workloads need a lot of processing power and memory, as well as fast communication between the processors. That is why an important feature of Snellius is its fast internal network.

Computing power

Snellius runs on Linux. Besides AMD processors, the system also features GPGPUs (General Purpose Graphics Processing Units). These accelerators make the processing power of graphics cards (GPUs) available for general-purpose computation alongside the CPUs. In addition, Snellius has 'fat nodes' with more memory (1 TB) and 'high-memory nodes' (4 TB and 8 TB of memory).

Tools and libraries

As a researcher, you can make use of a large collection of tools, compilers and libraries. Are you doing research or experiments in the field of machine learning, e.g. neural networks? The libraries and tools on Snellius make that work a lot easier.

Data storage

By default, you get 200 gigabytes of disk space for your own files (home file system). We back this up daily. You can also use 8 terabytes of temporary storage. We do not back up this temporary storage, and files on it are automatically deleted after two weeks. Do you need additional storage space? We can arrange that for you.
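Because temporary storage is not backed up and is cleaned automatically, it can help to check which files are approaching the two-week limit before they disappear. The sketch below is only an illustration, not an official SURF tool: the function name `files_older_than` is hypothetical, and it assumes that file age is judged by modification time, which the description above does not specify.

```python
import os
import time
from pathlib import Path


def files_older_than(root, max_age_days, now=None):
    """Return files under `root` last modified more than `max_age_days` ago.

    Assumption: age is measured by modification time (mtime). The actual
    clean-up policy on the system may use a different criterion.
    """
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400  # 86400 seconds per day
    stale = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                mtime = path.stat().st_mtime
            except OSError:
                # The file vanished or is unreadable; skip it.
                continue
            if mtime < cutoff:
                stale.append(path)
    return sorted(stale)
```

For example, calling `files_older_than(scratch_dir, 10)` on your own temporary directory (the location of that directory is not given here, so `scratch_dir` is a placeholder) would list files older than ten days, leaving a few days to copy anything important to your home file system or to additional storage.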

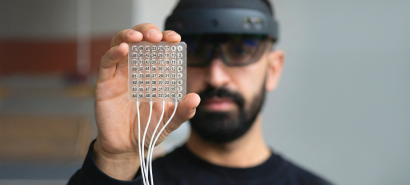

Meet Snellius! Virtual tour

Take the virtual tour of Snellius, including all technical details about the racks and GPU nodes.

This service is ISO 27001-certified

This means we meet the high requirements of this international standard on information security.

Information for users

Related services

Visualisation

We support you in using a range of visualisation options, for example visualising large networks or the flight paths of birds. With remote visualisation, you can view large datasets on your desktop without having to transfer the data to your computer.

High-performance data processing

Do you want to process and store large amounts of data? Our team of experts will support you in using our high-throughput data processing systems and storage solutions.

SURF Consultancy

We help you get started with ICT solutions for research and ICT infrastructure, for example visualising your data, optimising software, or designing and setting up computing facilities.
