Cloud Research Consultancy

Supporting your research at every stage

Advantages

Guiding research teams in digital environments

Helping you choose the right platforms and tools

Designing and building cloud-native research systems

Getting started with Cloud Research Consultancy

If you’re new to research IT or cloud computing, start with our Cloud Guide – it explains cloud service models, responsibilities, cost management and risk control so you can plan your project confidently.

The Cloud Delivery Service provides secure, managed access to public cloud platforms. Combined with our Cloud Research Consultancy, it gives you both a reliable foundation and expert support to build, deploy and operate your research environments efficiently.

We focus on projects that offer innovative value, advancing your research while also enabling SURF to develop new services, create reusable solutions, or provide capabilities that benefit the wider research community.

Researchers can also access a shared Kubernetes cluster through the consultancy, which provides a flexible platform for cloud-native workflows and scalable applications.

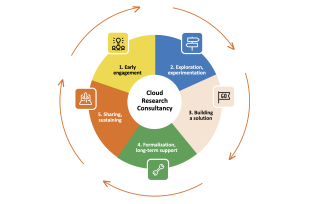

Project lifecycle phases

SURF’s Cloud Research Consultancy guides your team through every stage of cloud-based research. The following phases show how we support projects from early concepts to fully operational research environments.

1. Early engagement

We work with your team to clarify research goals, technical needs and constraints. Whether you have a rough idea or a full proposal, we help define infrastructure requirements and explore potential collaborations.

2. Exploration, experimentation

We support proof-of-concepts and feasibility studies to test tools, workflows and cloud setups before full deployment. This helps identify the most effective approach for your research.

Example case study: Safer cities with smart bikes, edge computing and public clouds

3. Building a solution

Once the project direction is clear, we design and deploy a research environment tailored to your needs. Deployments can use SURF infrastructure, public cloud platforms or hybrid setups, with responsibilities clearly divided among SURF, your institution and your research team.

4. Formalization, long-term support

We provide guidance and technical support to ensure your system remains secure, functional and scalable over time. This includes planning for sustainability and maintenance of research environments.

Example case studies:

From simulation to reality: The Green Village's data platform as an engine for innovation

How digital heritage was migrated to an open and transparent Kubernetes platform

5. Sharing, sustaining

When a solution demonstrates lasting value, we capture lessons learned, create reusable components or templates, and share knowledge with the research community. This supports innovation and reduces duplication across institutions.